Report: 2022 Family Diplomacy Training Impact

From July 10 to October 30, 2022, Learning Life successfully held our first Family Diplomacy Initiative (FDI) training for family diplomats (FDs). This report describes how we measured the impact of the training on participating FDs, and what we found.

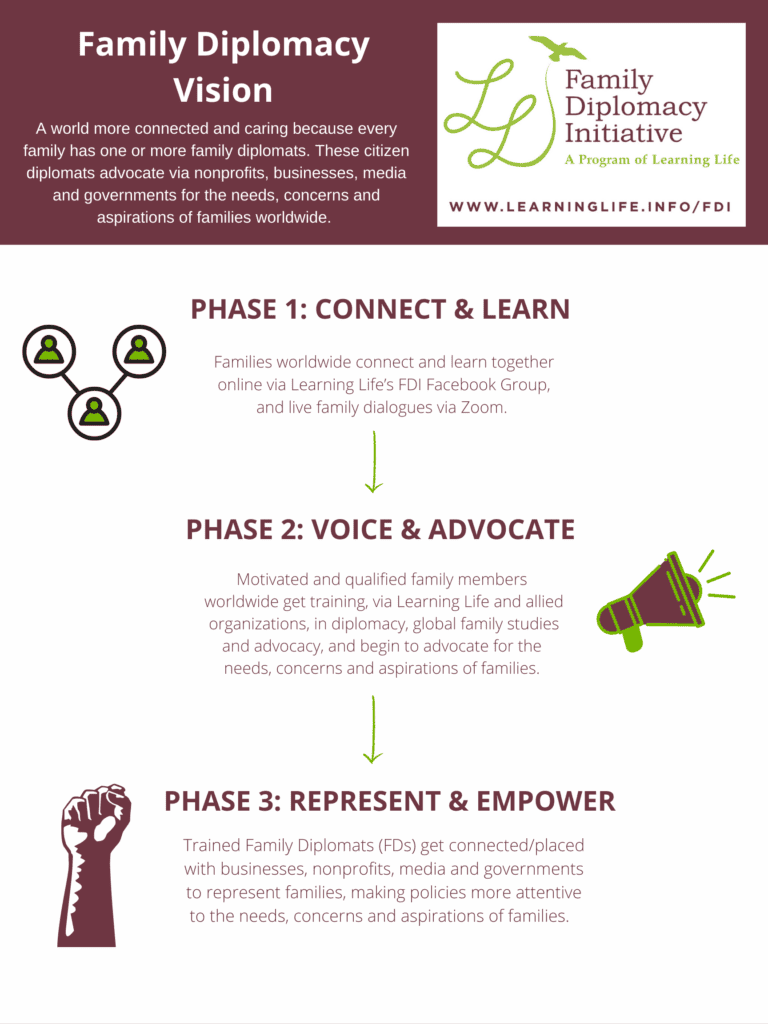

The FD training marks the beginning of FDI Phase 2: training a growing corps of volunteers worldwide to be family diplomats capable of effectively advocating for families that share similar challenges, from depression, disability or discrimination, to displacement, war, or climate change. See the “Family Diplomacy Vision” poster below for more about the three planned phases of FDI’s development.

Launched in 2016, FDI is an ambitious, long-term, grassroots effort to connect, train and empower a growing international corps of family diplomats to participate in decision-making at local to global levels. We envision a world more connected and caring because every family has one or more family diplomats, and those citizen diplomats advocate effectively via nonprofits, businesses, media and governments for the needs, concerns and aspirations of families worldwide. From 2016 to 2021, Learning Life worked on FDI Phase 1, growing our international family diplomacy community on Facebook to more than 13,000 people worldwide, and conducting live, international dialogues on family issues. As we continue increasing the number of people globally connected to FDI via Facebook, Phase 2 will, over the coming years, develop family diplomacy training and gradually build a corps of committed FDs in collaboration with allied nonprofits across the world. These FDs will begin advocating on issues affecting families via media, businesses, nonprofits and governments. Ultimately, as we continue Phases 1 and 2, we will, in Phase 3, begin institutionalizing the placement of well-trained FDs with collaborating media, business, nonprofit and government agencies to give meaningful voice and decision-making power to families. Thus, FDI is not a 10- or 20-year project, but rather a permanent movement to empower families for a more caring world.

About the 2022 FD Training

In 2022, the FD training consisted of two parts:

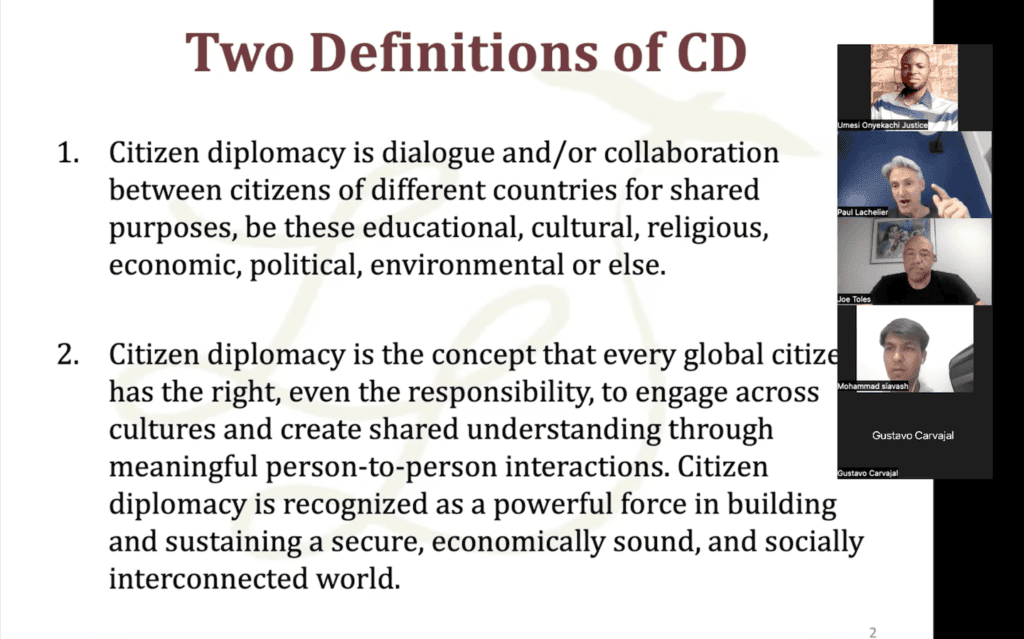

Part 1: July 10-August 21 (seven meetings): FDs learned about (a) trends, patterns and issues facing families across the world, plus (b) citizen diplomacy (i.e., citizens collaborating internationally on shared interests and issues) in order to develop their basic knowledge as family-diplomats-in-training.

Part 2: August 28-October 23 (nine meetings): FDs learned about storytelling, then each practiced and performed a family story of their own. (The ability to tell family stories effectively is one powerful way to speak to the needs, concerns and aspirations of families.)

The last meeting occurred on October 30 and focused on discussing what went well and what could be improved in the training. Click here to learn more about the FD training, including eligibility, benefits, and other details.

The first cohort of FD trainees consisted of 19 individuals from 15 countries: Costa Rica, Trinidad & Tobago, USA, Liberia, Nigeria, Ghana, Cameroon, Zimbabwe, Albania, Georgia, Afghanistan, Pakistan, India, Bangladesh and China. Seventeen completed Part 1 of the training, and seventeen completed Part 2.

Evaluation Method

For Part 1, Learning Life staff assessed the trainees’ improvement in knowledge about citizen diplomacy and global family trends, patterns and issues by comparing their answers to the same survey question before and after six weekly training meetings from July 17 to August 21. This simple, open-ended survey question was: “What do you know about citizen diplomacy, and the patterns, trends and issues facing families across the world?” In both the pre- and post-training surveys, trainees had ten minutes to type their answers to the question. Then, their pre- and post-training answers were mixed up and anonymized so the four volunteers who evaluated the trainees’ answers could not identify the persons, nor which answers were typed before or after the training. Over about two hours in one weekend meeting, Learning Life’s founder and director, Dr. Paul Lachelier, first trained the four evaluators on how to numerically score the answers using the following simple rubric, then led the evaluators through the scoring of all Part 1 pre and post-training survey answers.

Rubric: “For each survey answer, score fact density (1, 2 or 3), then coherence (1, 2, or 3), then add up the two scores (lowest score=2, highest score=6).”

| Criterion / Score | Poor (1) | Fair (2) | Good (3) |
| --- | --- | --- | --- |
| Fact Density | Few if any facts are offered, and/or the facts offered are incorrect. | Some facts are offered, and some of those facts are correct. | The answer offers a lot of facts, and most or all of those facts are correct. |
| Coherence | Answer is unclear, and/or sentences are not logically connected in a coherent whole. | Answer is somewhat clear, and/or sentences are somewhat logically connected in a coherent whole. | Answer is clear, and/or sentences are logically connected in a coherent whole. |
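The blinding and scoring steps described above can be sketched in a few lines of Python. This is only an illustration, not the procedure Learning Life actually used: the function names, the `A01`-style anonymous IDs, and the record layout are all hypothetical.

```python
import random


def blind_answers(answers, seed=None):
    """Shuffle survey answers and replace identities with anonymous IDs.

    `answers` is a list of (trainee, phase, text) tuples, where phase is
    "pre" or "post". Returns (blinded, key): `blinded` is what evaluators
    see, and `key` maps each anonymous ID back to (trainee, phase). The
    key is kept away from evaluators until scoring is finished.
    """
    rng = random.Random(seed)
    shuffled = list(answers)
    rng.shuffle(shuffled)
    blinded, key = [], {}
    for i, (trainee, phase, text) in enumerate(shuffled, start=1):
        anon_id = f"A{i:02d}"  # hypothetical ID format
        blinded.append((anon_id, text))
        key[anon_id] = (trainee, phase)
    return blinded, key


def rubric_total(fact_density, coherence):
    """Add the two rubric scores (each 1, 2 or 3) for a total of 2 to 6."""
    for score in (fact_density, coherence):
        if score not in (1, 2, 3):
            raise ValueError("each criterion is scored 1, 2 or 3")
    return fact_density + coherence
```

Keeping the key separate from the blinded list is what prevents evaluators from telling who wrote an answer, or whether it was written before or after the training.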

For Part 2, Learning Life staff measured the trainees’ improvement in their storytelling ability by comparing their story performances before and after five weekly story training and practice meetings from September 11 to October 9. For their pre-training stories, the trainees were instructed not to prepare but rather to just tell a family story in five minutes or less on either August 28 or September 4. Learning Life staff video recorded fourteen of the seventeen trainee pre-stories on these two days during our scheduled Sunday meetings. The remaining three trainees audio recorded their stories and uploaded them to Learning Life’s Google Drive after these dates: two because of unstable video connections, and the third because they missed the August 28 and September 4 meetings. After the training, the seventeen trainees video or audio recorded their stories (sixteen of the seventeen were able to video record) and uploaded them, some with the assistance of Learning Life interns. In only two of the seventeen cases did evaluators compare an audio pre-story with a video post-story; the rest were “apples-to-apples” comparisons: fourteen video-to-video and one audio-to-audio.

On Sunday, December 4, Dr. Lachelier organized ten Learning Life volunteers into two teams of five story evaluators, and met with each team once over about 2.5 hours to train them on the simple story-scoring rubric below. The first team scored nine trainees (nine pre-stories + nine post-stories for a total of eighteen stories), the second scored eight trainees (eight pre-stories + eight post-stories for a total of sixteen stories). Dr. Lachelier showed the teams the stories in random order, and instructed the evaluators not to worry about “figuring out which are pre-training, and which are post-training stories” but to “just focus on the stories: their clarity and power.” He also instructed them to judge audio stories based on what they could hear, and video stories based on what they could see and hear, and in all cases, not to “grade down the storyteller for their accent” as “clarity here refers to that which you are able to understand despite any accent, and whether that makes logical sense.”

After the evaluation teams scored the pre- and post-training stories, Dr. Lachelier organized and analyzed the scores. He ultimately dropped the scores of four evaluators, two on each team, so that each story was scored by three evaluators. In one team, it was unclear in two evaluators’ cases which scores belonged to which trainees, so those two evaluators’ scores were dropped; Dr. Lachelier then randomly dropped two evaluators’ scores from the second team so that both teams had the same number of scorers. The evaluators whose scores were used did not know the trainees, and hence could not be biased due to familiarity. However, the evaluators’ scores could have been biased by their perception of the trainees’ voices or appearance, since they could see and/or hear the trainees’ story performances via the audio or video recordings.

Rubric: “For each story, score clarity 1, 2 or 3, then power 1, 2, or 3, then add up the two scores (lowest score=2, highest score=6).”

| Criterion / Score | Poor (1) | Fair (2) | Good (3) |
| --- | --- | --- | --- |
| Clarity | The story wasn’t clear overall. | The story wasn’t clear in some parts. | The story was clear throughout. |
| Power | The story was not delivered in an emotionally moving, thought provoking, or captivating way. | The story was delivered in a way that was sometimes emotionally moving, thought provoking, or captivating. | The story was overall delivered in a way that was emotionally moving, thought provoking, or captivating. |
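As a rough illustration of how one story's score might be derived from three evaluators, the sketch below adds clarity and power for each evaluator and then averages the totals. The report does not state how the three evaluators' scores were combined, so the averaging step is an assumption, and the function name and sample scores are hypothetical.

```python
from statistics import mean


def story_score(evaluator_scores):
    """Average the clarity + power totals given by several evaluators.

    `evaluator_scores` is a list of (clarity, power) pairs, one pair per
    evaluator, with each value scored 1, 2 or 3. Averaging across
    evaluators is an assumption, not a method confirmed by the report.
    """
    totals = []
    for clarity, power in evaluator_scores:
        if clarity not in (1, 2, 3) or power not in (1, 2, 3):
            raise ValueError("clarity and power are each scored 1, 2 or 3")
        totals.append(clarity + power)  # per-evaluator total, 2 to 6
    return mean(totals)


# Hypothetical scores from three evaluators for one trainee.
pre_story = story_score([(2, 2), (2, 1), (3, 2)])
post_story = story_score([(3, 3), (3, 2), (3, 2)])
improvement = post_story - pre_story
```

With three evaluators, a trainee's score can change in steps of one third of a point, which would be consistent with fractional changes such as the 0.66-point decrease reported in the results.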

Evaluation Results & Discussion

The seventeen trainees who completed Part 1 improved their knowledge scores by 11% on average, from 3.8 out of 6 points prior to the training, to 4.2 out of 6 after the training. Eleven of the seventeen trainees improved their scores, while six had worse scores. The eleven who improved gained 1.09 points out of 6 on average (a 30% increase), while the six whose scores worsened lost 0.88 points out of 6 on average (a 22% decrease).

The seventeen trainees who completed Part 2 improved their storytelling scores by 24% on average, from 4.08 out of 6 points prior to the training, to 5.06 out of 6 after the training. Fifteen of the seventeen trainees improved their scores, one had a worse score, and one had no change in score. The fifteen who improved gained 1.16 points out of 6 on average (a 29% increase), while the one whose score worsened lost 0.66 points (a 15% decrease).
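The percentages and subgroup figures above can be cross-checked with simple arithmetic. The sketch below uses only the rounded averages quoted in this report; the function names are illustrative, and small differences from the reported percentages are expected because the published averages are themselves rounded.

```python
def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100


def net_mean_change(groups):
    """Overall mean change, given (count, mean_change) for each subgroup."""
    total = sum(count for count, _ in groups)
    return sum(count * change for count, change in groups) / total


# Average scores before and after, as quoted in the report.
part1_pct = percent_change(3.8, 4.2)
part2_pct = percent_change(4.08, 5.06)

# Subgroups: trainees who improved, worsened, or did not change.
part1_net = net_mean_change([(11, 1.09), (6, -0.88)])
part2_net = net_mean_change([(15, 1.16), (1, -0.66), (1, 0.0)])

print(f"Part 1: {part1_pct:.1f}% change, net {part1_net:+.2f} points")
print(f"Part 2: {part2_pct:.1f}% change, net {part2_net:+.2f} points")
```

From the rounded averages, Part 1 comes to about 10.5% (reported as 11%) and Part 2 to about 24%. The subgroup averages net out to roughly 0.4 and 0.98 points, matching the overall changes from 3.8 to 4.2 and from 4.08 to 5.06.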

The trainees’ Part 1 essay answers in many cases contained more expressions of opinion than demonstrations of knowledge. Accordingly, Part 1 knowledge scores may improve more overall if, before the pre- and post-surveys, we give trainees specific examples of answers that demonstrate less versus more knowledge, rather than only noting that we are scoring improvement in knowledge. Following pedagogical principles of repetition and recall, more review of key facts and concepts, for example at the start of each training session and in Facebook FD group messages, along with asking trainees to recall that knowledge in discussion, may also help enrich and cement their learning.

For evaluation of Part 2 storytelling, there are four specific ways we can improve the conditions under which our evaluators score, to reduce the risk of bias, help them evaluate, and avoid trainee-score confusion. First, as evaluators proceed through viewing and scoring stories, we can remind them which row to enter each score in. Second, when we are recording the trainees’ pre- and post-training stories via Zoom, we can set the display to speaker view rather than gallery view so that our scorers can more clearly see and evaluate the trainees’ physical performance (e.g., facial expressions, body movements, use of props). Third, we can more consistently ensure that subtitles are provided on all stories where the storyteller’s accent or a bad internet connection may impede comprehension. Fourth, we should be mindful of the order in which our evaluators see and score the stories, as this may bias them to score higher or lower depending on, for example, whether they first view a well-told story or a poorly told story.

Thus, overall, the trainees improved modestly in their knowledge of citizen diplomacy and global family life, and considerably in their skill at storytelling. There are a number of specific ways Learning Life can improve the training to boost and cement learning, and improve the evaluation to clarify scoring and reduce scorer bias and confusion.

“The FD training made me a better storyteller. I now know how to more effectively structure and present a story, and that enables me to advocate more effectively for myself, my family, and the issues we care about.” – Nusrat Jahan Nipa, Bangladesh

Acknowledgements

Learning Life is grateful to our trainees — Chirunim Agi-Otto, Aaron Akomea, Tenille Archie, Gustavo Carvajal, Ittie Chaunzar, Atenkeng Cynthia, Quan Chao Fan, Mulbah Isaac Flomo, Belle Gjeloshi, Esma Gumberidze, Lekshmi Lucky, Tadiwa Mudede, Nusrat Jahan Nipa, Sami Noman, Justice Umesi Onyekachi, Leroy Quoi, Mohammad Siavash, Chloe Terani, and Joe Toles — for their participation in the inaugural year of our FD training.

We thank our summer and fall interns — Anna Benson, Sarah DeCaro-Rincon, Janice Dias, Jenalyn Dizon, Yas El Argoubi, Fatima Elescano, Allie Hechmer, Ninah Henderson, Chanel Leonard, Mae Long, Sarah McInnis, Anya Neumeister, Maryam Pate, Alexandra Ravano, Emma Tomaszewski, Avanti Tulpule — for supporting our trainees.

We are also grateful to our trainers — Denise Bodman, Bethany Van Vleet, Sangeetha Madhavan, Andreas Fulda, Lisette Alvarez and Ben Yavitz — for effectively teaching our FDs about family, diplomacy, and storytelling. As well, thanks to our training evaluation volunteers — Peter Amponsah, Chelsea Dicus, Ruya Gokhan, Darrell Irwin, Cindy Mah, Zainab Mahdi, Arisa Oshiro, Edward Taylor, John Vaughan, Stacey Zlotnick, as well as interns Allie Hechmer, Ninah Henderson, Chanel Leonard, and Anya Neumeister — for their scoring of the trainees’ knowledge and storytelling ability.

Last but far from least, we thank all our donors who help support our Family Diplomacy Initiative, with special appreciation to Michael Brown, Nick Burton, Khadija Hashemi, Ana, Francois and Suzanne Lachelier, Sherry Mueller, Bill Schneider, Joe Toles and some anonymous donors for their generous financial support.

“Family Diplomacy is an eye opener to the world of diplomacy. We all know problems that affect our families, societies, and by extension humanity globally. But for the FD intervention, I couldn’t structure, tailor and share those issues to attract attention.” –Aaron Akomea, Ghana
